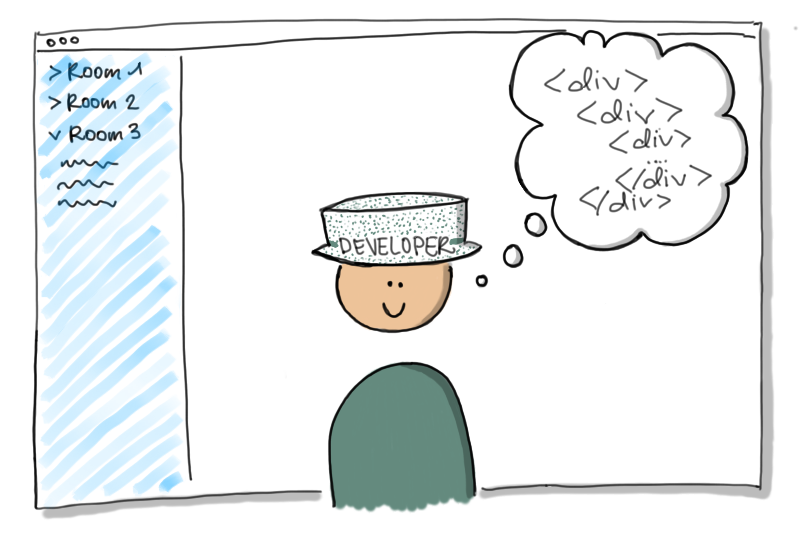

In the past decades, we have pushed the boundaries of what is possible to create on the web platform. If you think about it, it’s quite astounding what web developers have managed to create using mounds of non-semantic <div> elements which were never intended for that purpose. In our quest to represent anything that we want, we’ve decided to use the element which does not inherently represent anything.

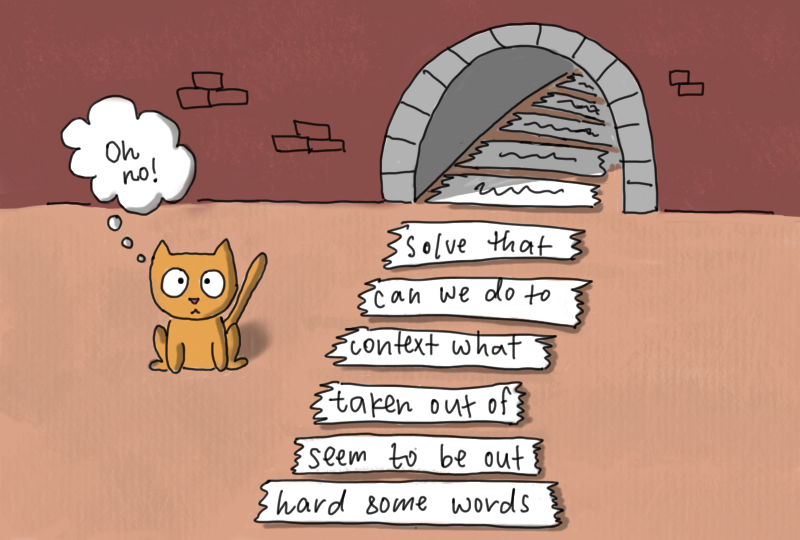

By choosing non-semantic HTML, we’ve removed any contextual information which might be useful for assistive technologies. It’s basically like cutting a book into sentences, removing any page numbers or information about the chapters, and placing them in a line on the floor. To read the book, you then have to walk next to each sentence and read it sequentially (guessing where any paragraph breaks might have taken place). And at any given time, it is possible that a door will shut right in front of your face, cutting you off from the rest of the sentences that you haven’t read. In the worst-case scenario, four walls will drop down from the ceiling, trapping you in a dark room with five sentences from which you cannot escape, no matter how hard you try.

Sound like fun?

This metaphor is not even that far-fetched. By not using semantic HTML, your web application is basically like a soup of words and sentences, without structure and without navigability. Without navigability and without elements which are clearly interactive (e.g. a link or a button), most content will simply not be accessible for users of assistive technologies (like a door shut right in front of their face). And if we are messing around with focus without knowing what we are doing, we can move users somewhere that they don’t want to be and don’t know how to get out of (like a booby trap that unexpectedly drops from the ceiling).

For a classic web application, i.e. a web application which consists primarily of content with a few interactive elements which can be implemented using links (<a>) or forms (<form>), we have absolutely no excuse: we should use semantic HTML elements everywhere.

To ensure that our app is accessible, we can test it using different assistive technologies and apply a few tips and tricks to improve the accessibility, but having semantic HTML as the basis will get us most of the way there.

I would say that an overwhelming majority of web applications fall in this category.

But what about those outliers? Those applications which really stretch the boundaries of what used to be possible on the web?

In the current climate of remote work, we are always looking for great new tools which allow us to be productive and work collaboratively.

In order to do this, we have tried to recreate our physical workplaces in a digital setting.

We try to find web apps for digital whiteboarding, or apps that create a virtual event where we can move our avatar around and talk to different people. There is one issue here that we’ve completely overlooked: When recreating our physical workspaces on the web, we forgot that our physical workspaces are also not really accessible for many different people.

Whiteboards are not accessible for people who have poor eyesight, or who have motor disabilities. Event venues and parties may be difficult for people who cannot see, hear, or who are easily overstimulated by too much noise or motion.

When we transfer these experiences onto the internet, can we then be surprised when the applications that we create are also not accessible?

I’m not surprised, but I am saddened.

In many well-designed tools, I am quite impressed with the different solutions that teams have come up with. We have really pushed the boundaries of what is possible. However, I feel like these solutions have achieved a clean interface design at the cost of sacrificing HTML, the skeleton of the web.

We are missing out on an opportunity to create tools for an under-served market (the number of users of assistive technologies is huge). But we are also missing out on the opportunity to work on challenging problems that are interesting and could truly help people.

Just think about it for a minute.

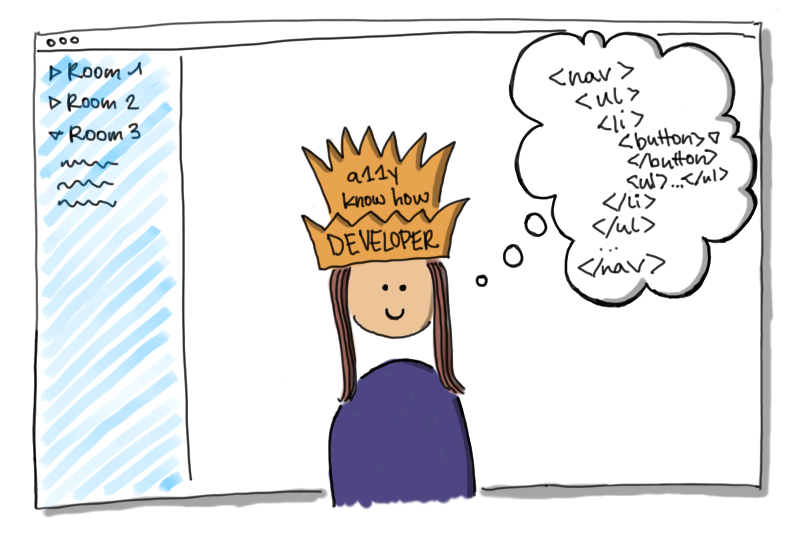

It is true that there is no exact semantic HTML element for everything that we would ever want to visualize in our UI. But if we are trying to think outside of the box anyway, why can’t we think outside of the box here as well?

If we were to build an app for a virtual event where we can move an avatar around a virtual room and talk with colleagues, why couldn’t we try to use modern technology to automatically generate closed captions for users who cannot hear (or cannot hear well due to their current situation or surroundings)? It might be difficult for some users to move through the virtual room using a mouse, so why couldn’t we make sure that users can move their avatar using their keyboard and additionally provide a navigation element (<nav>) that would allow the users to view a list of other users in the room and navigate directly to them to talk to them?
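To make the idea of a participant list a bit more concrete, here is a rough sketch of what such a navigation element might look like. The participant names, the `id` values, and the idea that following a link moves your avatar next to that person are all assumptions made up for illustration, not markup taken from any real product.

```html
<!-- Hypothetical markup: names and ids are invented for illustration. -->
<nav aria-label="People in this room">
  <ul>
    <!-- Each link targets the (assumed) element that represents that
         participant's avatar in the room. The app would still need its
         own script to move the user's avatar when a link is followed. -->
    <li><a href="#avatar-ada">Ada</a></li>
    <li><a href="#avatar-grace">Grace</a></li>
    <li><a href="#avatar-tim">Tim</a></li>
  </ul>
</nav>
```

Because this is an ordinary list of links inside a labelled `<nav>`, it should be reachable with the Tab key and show up as a navigation landmark in assistive technologies, without any custom keyboard handling for the list itself.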

Let’s also consider the example of the digital whiteboard. Traditionally, working on a whiteboard is not very accessible for a lot of people, but if we were creating a digital whiteboard where all of the text is already digital, we would have the opportunity to present that information in a form which would be accessible for screen readers and other assistive technologies. If we were visually grouping different notes on a pinboard inside of our app, might we not actually have an unordered list (<ul>) of text elements that we simply style and position differently using CSS? If we have different pinboards in our app with different names, why don’t we use different sections (<section>) which each have a heading element (<h2> - <h6>) so that they can be accessed from the accessibility tree, even if we do end up positioning them absolutely on an unlimited canvas? I’m not sure how you could best translate information about the visual proximity of information on a digital whiteboard (i.e. what other information is near what I am currently looking at) into a format which would be accessible, but isn’t it an interesting problem to think about?
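As a minimal sketch of the pinboard idea, a single board might be marked up roughly like this. The class names, the note texts, and the pixel positions are all hypothetical, and a real whiteboard would generate the positions dynamically.

```html
<!-- Hypothetical markup: class names, ids, and note texts are invented. -->
<section aria-labelledby="board-ideas-title">
  <h2 id="board-ideas-title">Ideas</h2>
  <!-- role="list" is added defensively: some browser and screen reader
       combinations are known to drop list semantics when list-style is
       set to none. -->
  <ul class="notes" role="list">
    <li class="note" style="left: 40px; top: 120px;">Automatic captions</li>
    <li class="note" style="left: 260px; top: 90px;">Keyboard movement</li>
    <li class="note" style="left: 150px; top: 240px;">List of people in the room</li>
  </ul>
</section>

<style>
  /* The list acts as the positioning context for its notes. */
  .notes {
    position: relative;
    min-height: 400px;
    margin: 0;
    padding: 0;
    list-style: none;
  }

  /* Each note is placed freely on the board, but stays a list item. */
  .note {
    position: absolute;
  }
</style>
```

Visually, the notes can sit anywhere on the canvas, while in the accessibility tree they remain a named section containing a heading and a list of three items. This sketch does nothing to convey which notes are near each other, so the proximity question stays open.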

There are a lot of fascinating problems out there.

I just wish more of us were working on them.